
8/6/2023 Airflow docker compose

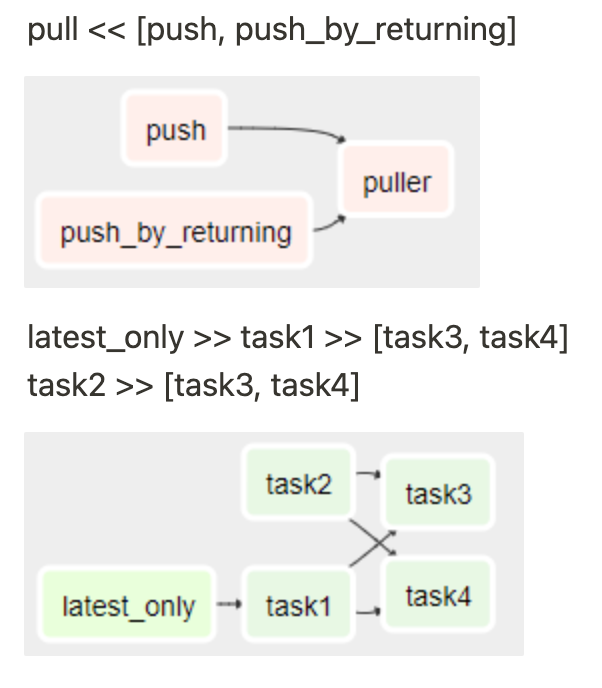

In this post, we will create a lightweight, standalone, and easily deployed Apache Airflow development environment in just a few minutes. You can find the full code for this post on my GitHub.

Why Airflow?

Apache Airflow is an open-source platform used to programmatically author, schedule, and monitor workflows. It allows users to define and execute complex workflows as Directed Acyclic Graphs (DAGs) and provides a rich set of features for managing dependencies, parallelism, and task execution. A DAG typically consists of several tasks or actions that need to be executed in a specific order. Apache Airflow also has a web interface for monitoring and visualizing the status of workflows, as well as a flexible architecture that allows it to integrate with a wide range of third-party tools and services.

Structuring a workflow as a DAG allows us to run independent tasks in parallel, saving time and money. Moreover, we can split a data pipeline into several smaller tasks. If a job fails, we can rerun only the failed task and its downstream tasks, instead of executing the complete workflow all over again.

Airflow is composed of three main components:

- Airflow Scheduler - the "heart" of Airflow, which parses the DAGs, checks the scheduled intervals, and passes the tasks over to the workers.
- Airflow Worker - picks up the tasks and actually performs the work.
- Airflow Webserver - provides the main user interface to visualize and monitor the DAGs and their results.

A high-level overview of Airflow components

Step-By-Step Installation

Now that we have briefly introduced Apache Airflow, it's time to get started. Since we will use docker-compose to get Airflow up and running, we have to install Docker first. Simply head over to the official Docker site and download the appropriate installation file for your OS.

We start nice and slow by simply creating a new folder for Airflow. Just navigate via your preferred terminal to a directory, create a new folder, and change into it.

mkdir -p /sources/logs /sources/dags /sources/plugins
chown -R "$
var/run/docker.sock:/var/run/docker.sock
condition: service_completed_successfully

The above docker-compose file simply specifies the services we need to get Airflow up and running: most importantly the scheduler, the webserver, the metadatabase (PostgreSQL), and the airflow-init job that initializes the database. At the top of the file, we define some local variables that are shared by every Docker container or service.

We successfully created a docker-compose file with the mandatory services inside. However, to complete the installation process and configure Airflow properly, we need to provide some environment variables. Still inside your Airflow folder, create a .env file with the following content:

AIRFLOW__CORE__FERNET_KEY=UKMzEm3yIuFYEq1圓-2FxPNWSVwRASpahmQ9kQfEr8E=
AIRFLOW__CORE__DAGS_ARE_PAUSED_AT_CREATION=True

The above variables set the database credentials, the airflow user, and some further configurations. Most importantly, they set the kind of executor Airflow will utilize. In our case, we make use of the LocalExecutor.

Note: More information on the different kinds of executors can be found here.
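The Fernet key in the .env file described above is what Airflow uses to encrypt connection credentials, so you may prefer to generate your own rather than copy one. Airflow reads configuration overrides from environment variables named `AIRFLOW__{SECTION}__{KEY}`, with double underscores. The following is a minimal sketch, using only the Python standard library, that generates a key in the Fernet format (32 random bytes, URL-safe base64-encoded) and sanity-checks the entries of a .env file. The helper names `generate_fernet_key`, `parse_env`, and `is_valid_fernet_key` are hypothetical and not part of Airflow, and the parser assumes plain `KEY=VALUE` lines without quoting.

```python
import base64
import os


def generate_fernet_key() -> str:
    """Return a fresh key: 32 random bytes, URL-safe base64-encoded."""
    return base64.urlsafe_b64encode(os.urandom(32)).decode("ascii")


def parse_env(text: str) -> dict:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        # Split on the first "=" only, so base64 padding in the value survives.
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip()
    return values


def is_valid_fernet_key(key: str) -> bool:
    """Check that the key is URL-safe base64 and decodes to exactly 32 bytes."""
    try:
        return len(base64.urlsafe_b64decode(key.encode("ascii"))) == 32
    except ValueError:  # covers bad padding and non-ASCII characters
        return False


if __name__ == "__main__":
    # Build the two entries shown in the post, with a freshly generated key.
    env_text = (
        f"AIRFLOW__CORE__FERNET_KEY={generate_fernet_key()}\n"
        "AIRFLOW__CORE__DAGS_ARE_PAUSED_AT_CREATION=True\n"
    )
    settings = parse_env(env_text)
    print(env_text, end="")
    print("key looks valid:", is_valid_fernet_key(settings["AIRFLOW__CORE__FERNET_KEY"]))
```

Running the script prints two lines that can be pasted into the .env file. The validity check only confirms the key's shape; it does not prove that Airflow has actually picked the key up.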
